The enactment of the Digital Personal Data Protection Act, 2023 (DPDP Act) represents a significant development in India’s legal framework governing the processing of personal data. As organisations increasingly rely on digital systems for accounting, taxation, compliance, customer management, analytics, and automation, personal data has become deeply embedded in everyday business operations. In this context, the protection of personal data is no longer a purely technological or IT-led concern, but an essential aspect of organisational responsibility and trust.

From a professional perspective, the DPDP Act requires an interpretative understanding rather than a narrow, checklist-driven approach. Its implications extend well beyond statutory compliance to areas such as corporate governance, internal controls, risk management, and audit assurance. Organisations are therefore expected to assess not only whether they comply with the letter of the law, but also whether appropriate governance frameworks, processes, and accountability mechanisms exist for responsible data handling.

This article provides an interpretative overview of the DPDP Act, 2023, and briefly explains the role of the Rules introduced in 2025, with a focus on aspects most relevant to professional and organisational practice.

Legislative Background and Scope

The DPDP Act is grounded in the Supreme Court’s recognition of the right to privacy as a fundamental right (Justice K.S. Puttaswamy v. Union of India, 2017). This landmark judgment established the constitutional basis for a comprehensive data protection regime in India, paving the way for legislation that balances individual rights with legitimate business and state interests.

Enacted in August 2023, the DPDP Act establishes a unified and nationally applicable framework for the processing of digital personal data in India. It seeks to replace fragmented and sector-specific practices with a consistent approach that applies across industries and organisational sizes.

The Act applies to:

- Processing of digital personal data within India, and

- Processing outside India, where it is connected with offering goods or services to individuals in India.

By adopting a principle-based approach, the legislature has focused on accountability and proportionality, rather than prescriptive compliance checklists. This provides organisations with flexibility in implementation, while placing the responsibility on them to demonstrate that data is handled lawfully and responsibly.

Key Concepts under the DPDP Act

The DPDP Act introduces foundational terms that carry significant governance implications and help clarify roles and responsibilities within the data ecosystem.

- Data Principal – the individual to whom personal data relates, such as customers, employees, vendors, or users.

- Data Fiduciary – the entity that determines the purpose and means of processing personal data.

This framework emphasises that organisations act as custodians of personal data, rather than owners. Personal data is therefore held in trust, and organisations are expected to exercise care, transparency, and accountability in how such data is collected, processed, and retained.

Consent and Lawful Processing

Consent forms the primary basis for lawful processing under the DPDP Act. Such consent must be free, specific, informed, unconditional, and unambiguous, signified by a clear affirmative action, and must relate to a clearly defined and lawful purpose. Importantly, the Act also requires that consent be capable of being withdrawn, reinforcing individual control over personal data.

Although the Act recognises "certain legitimate uses" for which processing may occur without consent, such as compliance with legal obligations, these situations are narrowly defined. Organisations must therefore design processes that ensure consent is not only obtained properly, but also documented, tracked, and honoured throughout the data lifecycle.

For professionals and organisations, this creates expectations similar to internal control documentation, where consent records, purpose limitation, and withdrawal mechanisms must be demonstrable, auditable, and consistently applied across systems.
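The expectations above can be illustrated with a minimal sketch of a consent ledger. It is not a prescribed or statutory format: the class names, field names, and identifiers such as `dp-001` are hypothetical, and a real system would also need persistent storage, notice records, and access controls. The sketch only shows how purpose limitation and withdrawal can be made checkable and auditable.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class ConsentRecord:
    """One consent given by a Data Principal for one stated purpose (hypothetical schema)."""
    principal_id: str
    purpose: str
    granted_at: datetime
    withdrawn_at: Optional[datetime] = None

    def is_active(self) -> bool:
        return self.withdrawn_at is None


@dataclass
class ConsentLedger:
    """In-memory log of consent records. Withdrawn records are kept, not deleted,
    so that the history remains available for audit."""
    records: List[ConsentRecord] = field(default_factory=list)

    def grant(self, principal_id: str, purpose: str) -> ConsentRecord:
        record = ConsentRecord(principal_id, purpose, datetime.now(timezone.utc))
        self.records.append(record)
        return record

    def withdraw(self, principal_id: str, purpose: str) -> int:
        """Mark every active consent for this principal and purpose as withdrawn.
        Returns the number of records affected."""
        count = 0
        for r in self.records:
            if r.principal_id == principal_id and r.purpose == purpose and r.is_active():
                r.withdrawn_at = datetime.now(timezone.utc)
                count += 1
        return count

    def may_process(self, principal_id: str, purpose: str) -> bool:
        """Purpose limitation: allow processing only under an active consent
        for this exact purpose."""
        return any(
            r.principal_id == principal_id and r.purpose == purpose and r.is_active()
            for r in self.records
        )


ledger = ConsentLedger()
ledger.grant("dp-001", "invoicing")
print(ledger.may_process("dp-001", "invoicing"))
print(ledger.may_process("dp-001", "marketing"))
ledger.withdraw("dp-001", "invoicing")
print(ledger.may_process("dp-001", "invoicing"))
```

Keeping withdrawn records rather than deleting them is a design choice made here for auditability; whether and how long such records may be retained is itself a question to be settled against the Act and the Rules.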

Rights of the Data Principal

The DPDP Act grants enforceable rights to individuals, strengthening their ability to exercise control over their personal data. These include:

- The right to access information relating to personal data

- The right to correction and erasure

- The right to grievance redressal

These rights impose operational responsibilities on organisations to maintain systems and processes that enable timely responses, ensure data accuracy, and track actions taken. Inadequate handling of such requests may indicate governance and control deficiencies and may also undermine stakeholder trust.
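As an illustration of the tracking described above, the following is a minimal sketch of a request register. All names are hypothetical, and `target_days` is an internal service level chosen by the organisation for the example, not a figure taken from the Act or the Rules.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum
from typing import List, Optional


class RequestType(Enum):
    ACCESS = "access"
    CORRECTION = "correction"
    ERASURE = "erasure"
    GRIEVANCE = "grievance"


@dataclass
class RightsRequest:
    """One request from a Data Principal, with the action taken recorded on closure."""
    request_id: str
    principal_id: str
    request_type: RequestType
    received_on: date
    closed_on: Optional[date] = None
    action_taken: str = ""

    def close(self, closed_on: date, action_taken: str) -> None:
        self.closed_on = closed_on
        self.action_taken = action_taken

    def is_overdue(self, today: date, target_days: int) -> bool:
        """True if the request is still open past the internal target.
        target_days is an organisational assumption, not a statutory deadline."""
        if self.closed_on is not None:
            return False
        return today > self.received_on + timedelta(days=target_days)


def overdue_requests(requests: List[RightsRequest], today: date, target_days: int) -> List[RightsRequest]:
    """Return the open requests that have exceeded the internal target."""
    return [r for r in requests if r.is_overdue(today, target_days)]


requests = [
    RightsRequest("R1", "dp-001", RequestType.ACCESS, date(2025, 1, 1)),
    RightsRequest("R2", "dp-002", RequestType.ERASURE, date(2025, 1, 1)),
]
requests[1].close(date(2025, 1, 5), "personal data erased from CRM")
for r in overdue_requests(requests, today=date(2025, 2, 1), target_days=15):
    print(r.request_id, r.request_type.value)
```

A register of this kind gives management and auditors a simple view of open, closed, and overdue requests, which is the sort of evidence that supports an assessment of control effectiveness.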

Significant Data Fiduciaries

The Central Government may notify certain Data Fiduciaries as Significant Data Fiduciaries (SDFs) based on factors such as the volume and sensitivity of personal data processed and the risk posed to the rights of individuals. This classification reflects the principle that higher-risk data processing should be subject to enhanced safeguards.

SDFs are subject to additional obligations, including the appointment of a Data Protection Officer and the conduct of Data Protection Impact Assessments. These measures are intended to embed privacy considerations into organisational decision-making and to proactively identify and mitigate data-related risks.

This risk-based differentiation aligns with established governance and assurance principles and mirrors global best practices in data protection regulation.

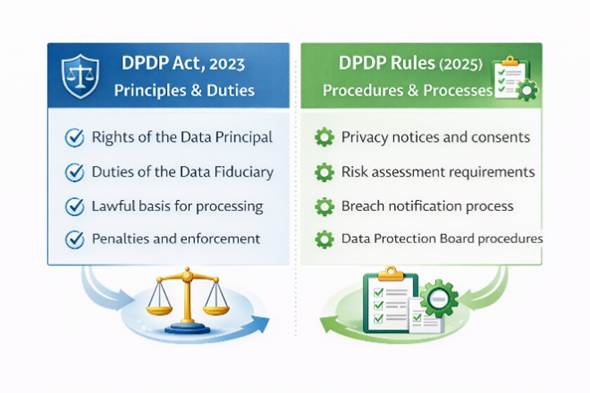

DPDP Act, 2023 and the Role of the Rules Introduced in 2025

While the DPDP Act was enacted in 2023, its implementation is supported by delegated legislation in the form of Rules. In 2025, the Government released draft Digital Personal Data Protection Rules to operationalise the Act and provide procedural clarity.

It is important to clarify that:

- The DPDP Act, 2023 remains the principal law.

- The Rules do not amend or replace the Act.

- The Rules specify procedural and operational requirements for compliance.

In effect, the Act defines what must be complied with, while the Rules outline how compliance is to be achieved. This distinction is well recognised in Indian regulatory practice and helps organisations translate legal principles into practical, implementable processes.

Penalties, Governance, and Professional Implications

The DPDP Act provides for significant monetary penalties in cases of non-compliance, particularly for failures relating to data security safeguards and personal data breaches; the penalty for failing to take reasonable security safeguards can extend to ₹250 crore. These penalties underscore the seriousness with which data protection obligations are viewed under the law.

However, for organisations, reputational impact and stakeholder trust often present greater risk than financial penalties alone. A data protection failure can affect customer confidence, business relationships, and regulatory standing.

From a governance perspective, the Act has direct implications for:

- Internal control assessment

- Risk management frameworks

- Vendor and outsourcing oversight

- Board and audit committee reporting

Organisations are increasingly expected to treat data protection as a board-level governance issue rather than an isolated compliance function.

Conclusion

The Digital Personal Data Protection Act, 2023 represents a significant step towards accountable and responsible data governance in India. For organisations and professionals alike, the Act reinforces the importance of trust, transparency, and sound governance in a digital economy.

An interpretative understanding of the Act—supplemented by awareness of the evolving Rules introduced in 2025—is essential for effective implementation. Viewed holistically, DPDP compliance should be regarded not merely as a legal requirement, but as an integral component of good corporate governance and sustainable business practice.

References

Draft Digital Personal Data Protection Rules, 2025 (English).